Stupid Machines: a UX design technique

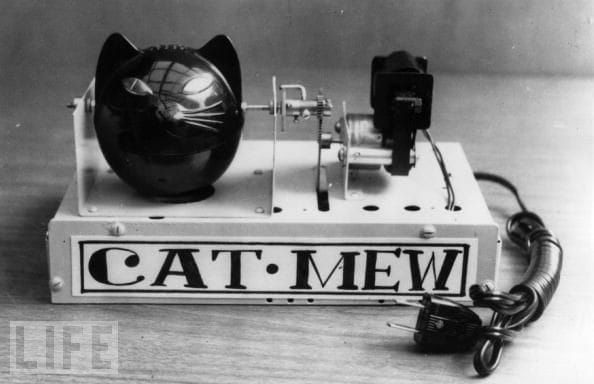

Consider the following stupid machines:

- machine that runs into corners at high speed and yelps upon impact

- goes up against a person’s leg, looks up at them, and smirks

- same as above, but sprays water at a leg

- accelerates towards raised edges and falls off

- machine composed of two halves that repeatedly pull themselves apart, in opposite directions

- machine that continuously falls apart while you assemble it

- machine that topples itself onto its back, repeatedly

- machine that develops boils, which expand and finally burst

- machine that freaks out on hearing a particular word or phrase, which it won’t tell you

- interrupts you, claiming you said its name when you didn’t, and ignores you when you actually do

- machine that confesses to a murder

- machine that gets depressed and stops functioning, occasionally committing suicide

- machine that runs toward shiny objects, cuddles them saying “my precious”, develops a split personality, rejects the object, and repeats

- when you point a light at it, runs away screaming “my eyes! my eyes!”

- goes up against a person’s leg, looks up at them, and runs away screaming “my eyes! my eyes!”

- machine that never finishes sentences, constantly starting new ones

- machine that creeps up behind you and peers over your shoulder

- says sad things in a happy, sing-song voice

- machine that cross-dresses as another kind of machine

- machine that asks questions but doesn’t wait to hear your response

Note that these machines all exhibit:

- Intentionality,

- Planning ability,

- Selective action,

so technically they are intelligent compared to most current technology.

So why do many of these remind one of how technologies currently behave? Many user experiences are tone-deaf, inconsistent, unresponsive, rude, clueless, and often plain inhuman.

I propose a new design technique: making Stupid Machines as a way to clarify design intent and set boundaries. There is some precedent for this:

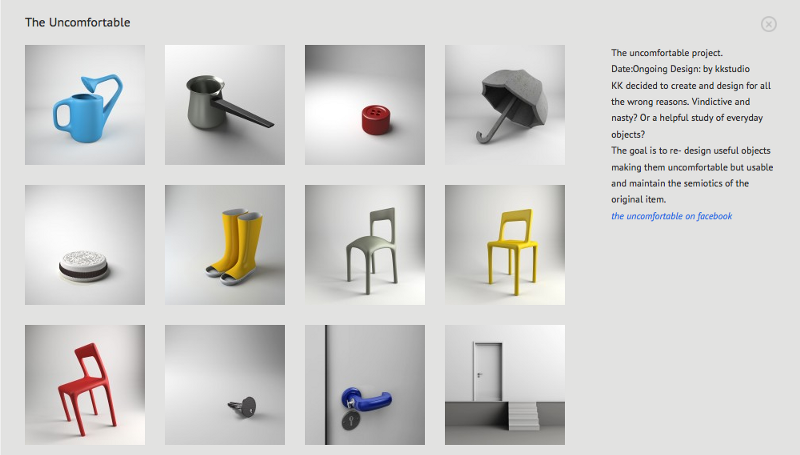

"The Uncomfortable", a project by Katerina Kamprani

All of these objects have one small perversion that frustrates their original design intent: something is promised, but disappointment ensues on any actual attempt to use them.

I’m suggesting something slightly different: to imagine objects that are not just functionally disappointing, but actively emotionally and psychologically disturbing.

This is useful when you are not sure what will make for a good experience; in such cases, it is still possible to imagine various flavours of bad, terrible and annoying experiences. Once you remove the ‘obvious’ instances – those that are usually attributable to implementation errors – what you are left with is a nuanced understanding of what the experience should not be.

Stupid Machines is not about emotional design – the explicit attempt to engender emotions in the user. That’s a fine endeavour, but if you’ve reflected on any relationship at all, you know that attempting to make people happy based on your own notions of happiness and value is only half the story. The other half is understanding what people are experiencing.

This matters to UX because people ascribe psychology and agency to computers even when the technology is programmed with no such capabilities.

(For the seminal work, see: Nass, Clifford, Jonathan Steuer, and Ellen R. Tauber. 1994. “Computers Are Social Actors.” Pp. 72–78 in Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, CHI ’94. New York, NY, USA: ACM.)

Until technology can perform the complex interpretative work of reading voice, facial expression and language, and of modeling emotional state, the best we can do in UX is to make sure that what we design is well-mannered and well-raised.

Stupid Machines. Design them, and avoid them completely.